The Future of MCP and Context Engineering

I don’t like MCP servers. I understand the promise of convenience, how tool “discovery” works, and the ability to be plug and play. These characteristics are attractive and undoubtedly apply perfectly to certain use cases like Claude Code, Codex, etc.

Connecting your first MCP to your favorite code assistant feels like magic. One command and you have conversational access to a whole new system. That works until page 2. Using an MCP server is the act of putting more stuff into your agent’s context window (most innovations in agents are simply messing with the context window).

The MCP client requests the available tool definitions from the MCP server and injects them into the agent’s context. These definitions contain the tool descriptions and instructions on when and how to use them. In other words, if you connect an agent to a server with 10 tools, 10 different descriptions and instructions get included in your context window. Five MCP servers with 10 tools each mean 50 tool definitions and 50 different instructions.

MCPs also have some problems when it comes to authentication, but that’s for another post.

Attempts to mitigate the context window problem

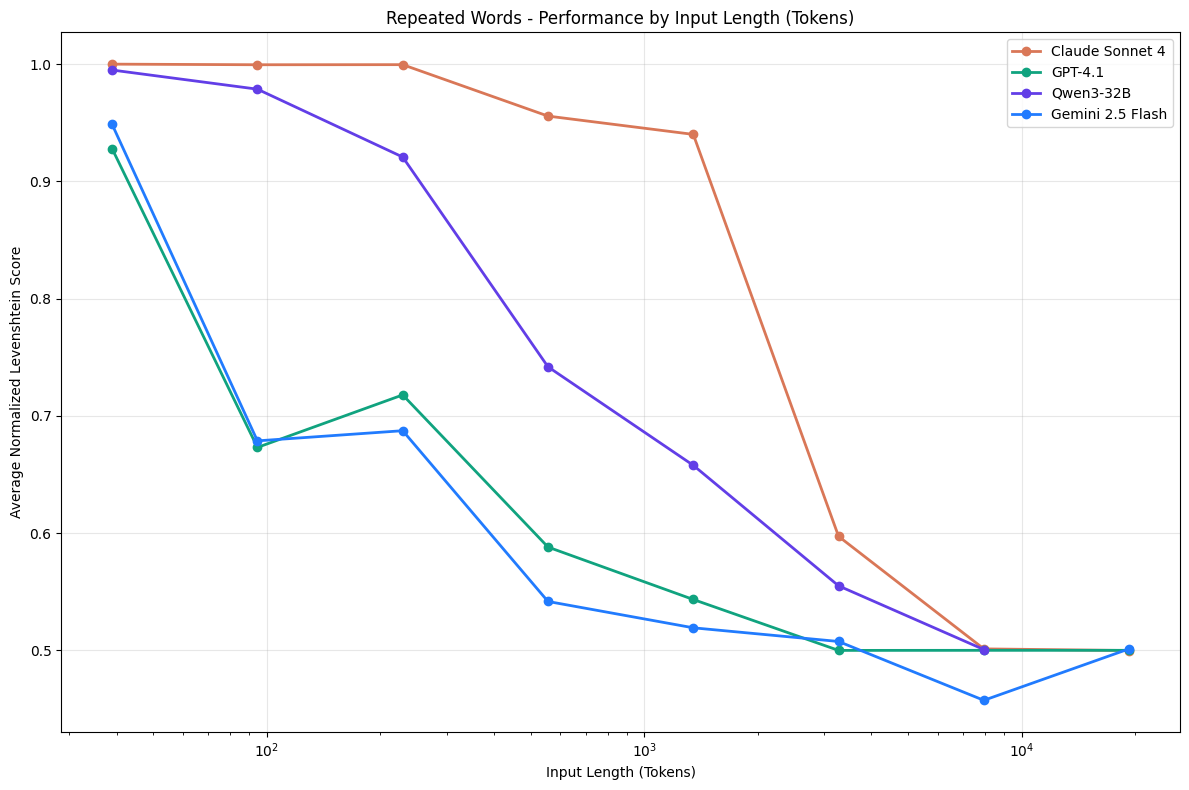

This problem of stuffing too much into the context window has already been identified by several companies and AI labs. I really like a study by Chroma where the concept of Context Rot is proposed. The central idea is simple: more input tokens result in worse performance. I recommend reading it.

AI labs and companies building agents have made proposals to “save” context window space by changing how tools are used. Cloudflare launched what they call MCP code mode, and Anthropic itself, the creator of MCP, also found a way to work around this problem through Code Execution with MCP.

Both approaches follow the same line of reasoning: expose MCP tools as code APIs and provide a code execution tool to the agent, which can call these APIs to operate on MCP results through code, without observing the tool outputs directly.

These approaches are quite interesting, but still don’t solve the problem of inserting all tool definitions into your agent’s context. They only offer a smarter way to operate on the return values of your MCP server tools or any other set of tools in your agent. They are MCP-agnostic.

Progressive disclosure

Progressive disclosure is a way of dispatching or storing relevant information outside the agent’s context window.

There are two essential components in progressive disclosure:

- An external storage mechanism

- Read and write tools for that storage mechanism

The most human-intelligible storage mechanism is a file system. And the best part: there are already many tools for manipulating text files, all through a terminal. Commands like grep, glob, and cat are common for manipulating text in file systems, and language models know how to use them because they appear extensively in training data. It’s as if language models are really good at doing tasks that have already been solved. I wonder why?

With a file system, you can store instructions and tool usage patterns as text files and code scripts, like Python scripts, for example. Give your agent access to the terminal and certain commands, and you enable it to discover how to solve a problem by exploring its own capabilities, which are described in the file system as text.

The idea of using text files as on-demand memory is the foundation of the concept of skills in context engineering. Skills allow you to separate different abilities an agent can have. A skill is simply a file or a set of files containing instructions and scripts. The essential part is just a set of instructions. There are skills that have no scripts and are still a valid pattern, because the main purpose of the skill concept is the ability to load specific instructions for a given task on demand. As if the agent “remembered” or “discovered” how to solve the task. In reality, the person who wrote the skill is the one who solved it.

Progressive disclosure has inspired innovative methods like Recursive Language Models.

Three possibilities

Going forward, I see three possible paths for using MCP without exhausting our context window:

- Don’t use MCP. Something better will be invented.

- The way MCP servers are used will change drastically for complex use cases. Simpler use cases will keep using the traditional approach, but any more complex agent will apply various techniques to avoid exhausting the context window.

- Every agent will use a tool search tool.

On point 3: Anthropic and GitHub Copilot already optimize which tools are selected based on the task. The common and simple approach is to index tool descriptions and search for the most relevant tools for each task, probably through semantic similarity and keyword search. I haven’t built a tool search tool myself, so I can’t say what works best.

Deal breaker

For me, there’s one blocker that creates a lot of inertia around adopting MCP servers the traditional way: tool definitions are written by someone else. When the agent initializes and loads the tool definitions from the MCP server, a prompt from someone else, who doesn’t take your use case into account, is entering your context window. Maybe I’m being too paranoid, too much of a purist, and overestimating the impact of tool definitions on agent performance. Yeah, probably.

But the context window must be treated with this level of care. At the most fundamental level, it is the MOST POWERFUL way to control your agent’s behavior without modifying the model weights. It’s crucial to ensure that tools are of high quality. You have to think about what to return when an operation fails and how to insert instructions in tool returns so the tool ecosystem works well. For example, a web search tool might contain the following instruction in its return:

...use the read_web_page tool to read the page found by the search

This includes new instructions so the tool ecosystem works in harmony. It’s a detail that sometimes isn’t even necessary, but it’s important if you’re aiming for maximum performance.

So there’s a balance when using MCP servers: it’s easy to give your agent new tools, but if done naively, you’ll pollute your agent’s context.

I personally think MCP as we know it is going to die.